Digital animation tool for visualizing realistic hand movement.

The basic idea arose from exploring how artificial intelligence and creative users can collaborate. Together with a fellow student, I investigated how artificial intelligence can support creative processes. For our bachelor thesis, we focused on the area of animation.

Animations help convey knowledge to the viewer quickly and easily. They can evoke emotions, portray facts and give a sense of dynamism. However, animating is a complex and time-consuming process. Creating movement in particular can be difficult: even small discrepancies can be enough for the viewer to lose the illusion of life. The same is true of hand gestures. Anyone who has had to draw a hand knows how difficult it is to keep it anatomically correct. Now imagine how difficult it must be to maintain that correctness throughout an animation. This is exactly where we wanted to start when developing Hanmo.

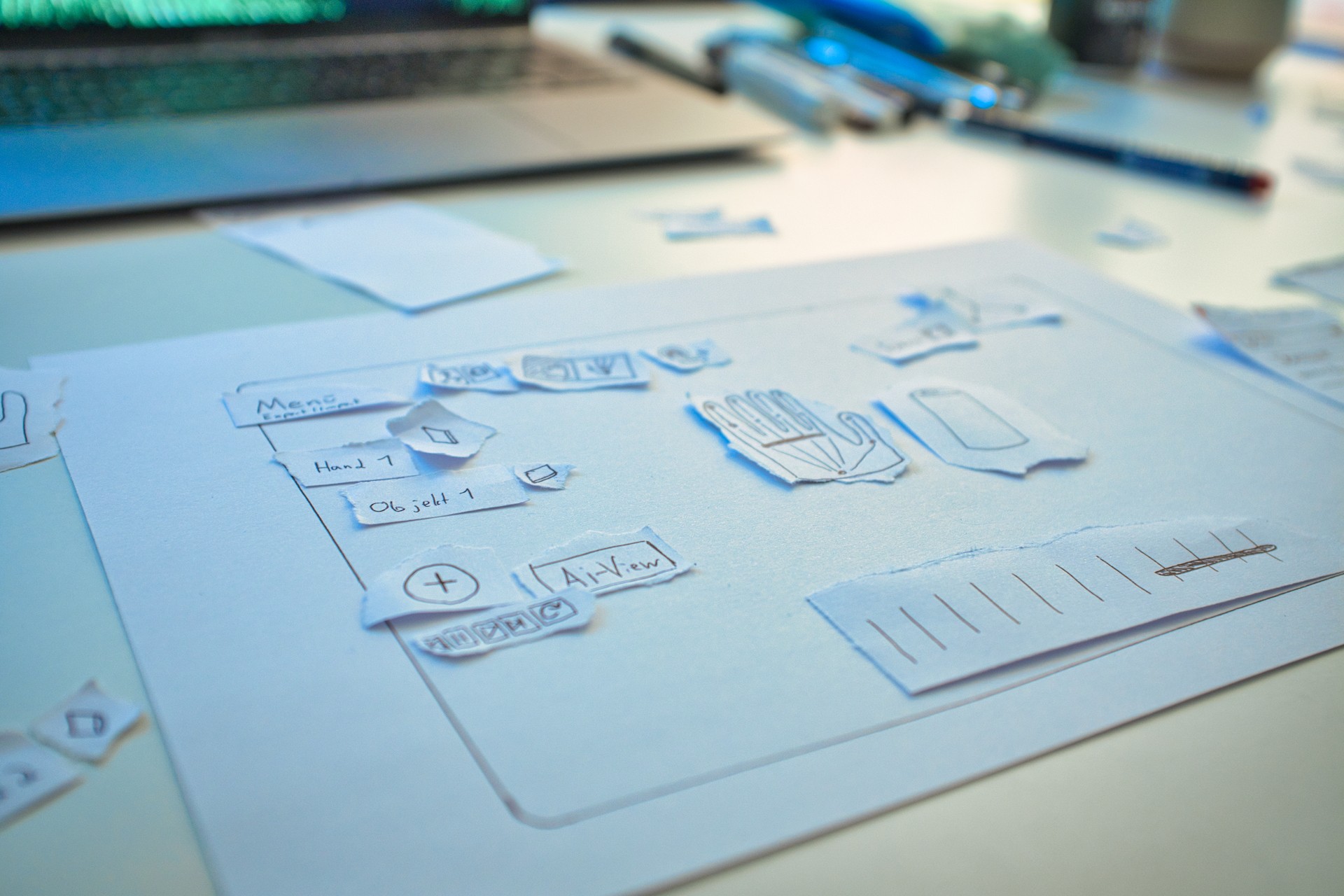

Concept

Hanmo is software for animators that uses artificial intelligence to help them create realistic and anatomically correct hand movements. The user achieves a realistic result without numerous iterations or detailed knowledge of anatomical movement. The animator uses the timeline to define a rough sequence of movements for each hand, which is then visualized by the AI.

The hands can be told to interact with objects or with other hands. All movements can be adjusted to convey dynamics and emotion. To rule out anatomical errors introduced by the user, precise manual adjustments of the movement are not possible. This creates a balance between control and freedom, which makes Hanmo exciting and varied to use.

Innovation

Hanmo differs significantly from conventional animation tools through its use of AI.

The main question is how to tell the AI what end result you want. In Hanmo, we use motion presets arranged in a sequence. These presets were previously learned by the AI, which ensures that the details and micro-movements of the hand can be recreated realistically.

The presets are divided into four main areas:

• General movement in space

• Interaction with an object

• Interaction with another hand

• Interaction with itself

Each preset has different settings. In the [Gripping] preset, for example, the user can define what type of grip the AI uses to interact with an object.

The special feature here is that the user can limit the area on the object in which the AI can grip. On a coffee mug, for example, the user can limit the area to the handle only, so that the AI cannot grab the entire mug.
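The idea of limiting the grip area can be illustrated with a minimal Python sketch. It is not Hanmo's implementation: the names `Box`, `GrippingPreset` and `allowed_contacts`, the grip type label, and the use of an axis-aligned box as the allowed region are all assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Box:
    """Hypothetical axis-aligned region on an object where gripping is allowed."""
    lo: Point
    hi: Point

    def contains(self, p: Point) -> bool:
        # A point is inside if every coordinate lies within the box bounds.
        return all(l <= c <= h for l, c, h in zip(self.lo, p, self.hi))


@dataclass
class GrippingPreset:
    """Hypothetical preset settings: a grip type plus the user-defined grip area."""
    grip_type: str        # illustrative label, e.g. "pinch"
    allowed_region: Box

    def allowed_contacts(self, candidates: List[Point]) -> List[Point]:
        # Keep only candidate contact points inside the user-defined area.
        return [p for p in candidates if self.allowed_region.contains(p)]


# Assumed example: the region roughly covers the handle of a mug.
handle = Box(lo=(0.05, 0.02, -0.01), hi=(0.09, 0.08, 0.01))
preset = GrippingPreset(grip_type="pinch", allowed_region=handle)

candidates = [
    (0.07, 0.05, 0.0),    # on the handle
    (0.0, 0.05, 0.0),     # on the mug body, outside the region
    (0.06, 0.03, 0.005),  # on the handle
]
contacts = preset.allowed_contacts(candidates)
```

In this sketch the point on the mug body is filtered out, so a grip could only be planned on the handle.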

The chained movement presets are then automatically linked and animated by the AI. The user can make small adjustments to the movement to get the desired result.
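As a rough sketch of how a sequence of presets on a timeline could be represented before it is handed to the AI, consider the following Python example. Every name in it (`Category`, `PresetClip`, `chain`) is hypothetical, and the linking step here only computes the gaps between consecutive presets. In Hanmo, the actual transitions are generated by the AI.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Category(Enum):
    """The four preset areas named in the text."""
    MOVE_IN_SPACE = "general movement in space"
    OBJECT = "interaction with an object"
    OTHER_HAND = "interaction with another hand"
    SELF = "interaction with itself"


@dataclass
class PresetClip:
    """Hypothetical timeline entry: one preset with a start time and duration in seconds."""
    name: str
    category: Category
    start: float
    duration: float

    @property
    def end(self) -> float:
        return self.start + self.duration


def chain(clips: List[PresetClip]) -> List[Tuple[str, str, float]]:
    """Order clips by start time and list each transition with its gap in seconds."""
    ordered = sorted(clips, key=lambda c: c.start)
    transitions = []
    for prev, nxt in zip(ordered, ordered[1:]):
        gap = nxt.start - prev.end
        if gap < 0:
            raise ValueError(f"{prev.name!r} overlaps {nxt.name!r}")
        transitions.append((prev.name, nxt.name, gap))
    return transitions


timeline = [
    PresetClip("Gripping", Category.OBJECT, start=1.5, duration=2.0),
    PresetClip("Reach", Category.MOVE_IN_SPACE, start=0.0, duration=1.0),
    PresetClip("Release", Category.OBJECT, start=3.5, duration=1.0),
]
transitions = chain(timeline)
```

Each resulting tuple names two neighbouring presets and the time available for linking them, which is the information a transition generator would need.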

Hanmo can export the created animation as motion capture data. The user can therefore import the movement into 3D animation programs and apply it to a character model. This means the animator doesn't have to buy expensive motion capture equipment and can still create realistic hand movement. In addition, the software can be integrated into existing animation workflows.